What makes AI hallucinations such a critical problem today?

If your AI sometimes “sounds confident but wrong,” you’re not alone. Hallucinations erode trust, trigger rework, and stall production rollouts, especially in regulated industries or customer-facing workflows. The good news: Automated Reasoning (AR) checks are now available in Amazon Bedrock Guardrails, bringing mathematical verification to your AI responses and delivering up to 99% verification accuracy on policy compliance.

In plain terms: instead of relying on a model’s best guess, AR checks translate your rules into logic and use constraint solvers to prove whether an answer is compliant before it ever reaches a customer or agent.

This post breaks down what AR checks are, how they work, where they shine, and how Data Sleek helps you implement them quickly and safely across contact centers, knowledge bots, and internal assistants.

What are Automated Reasoning checks in Guardrails?

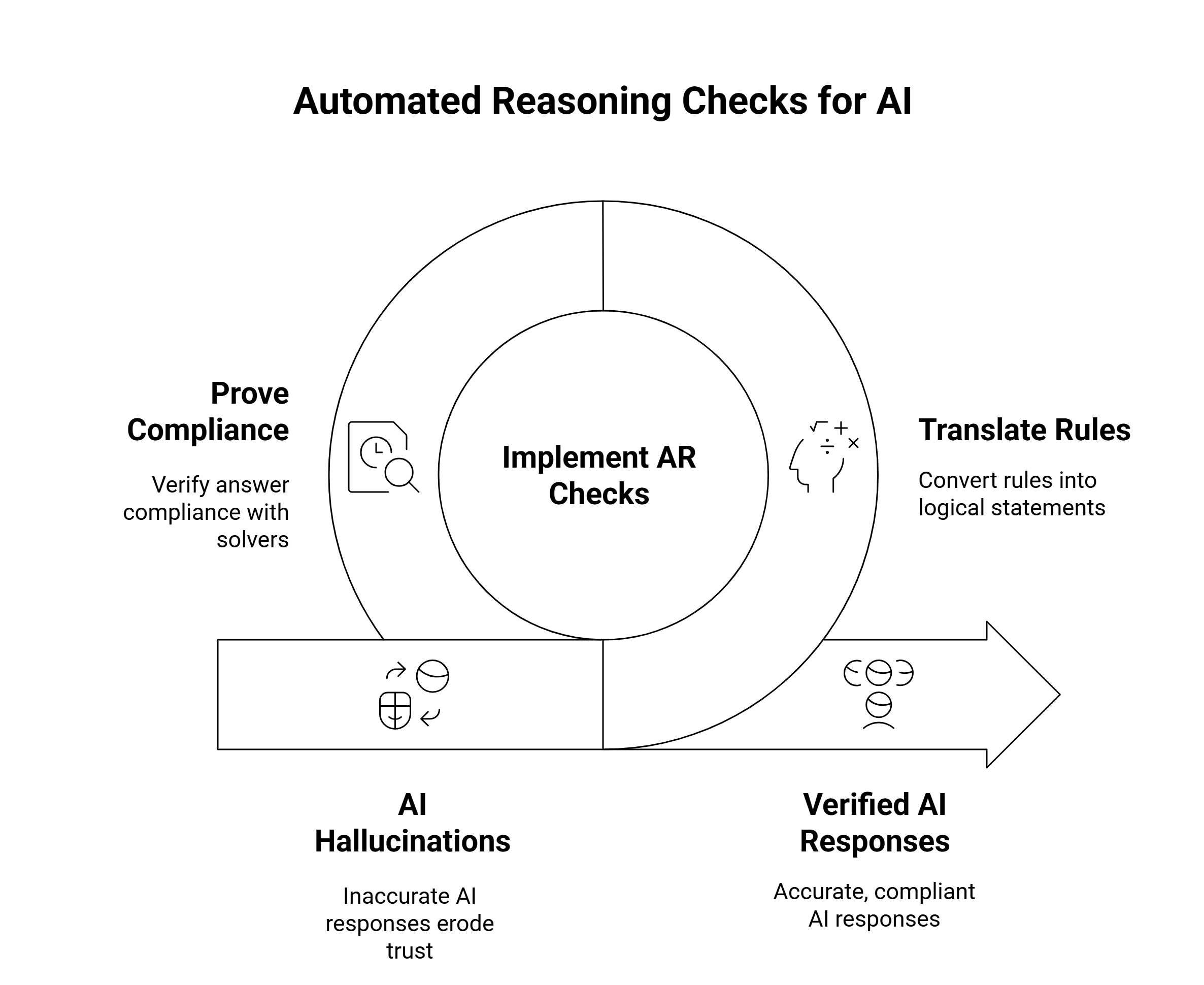

Think of Guardrails as your AI’s safety filter. You define what’s allowed (and what isn’t), and Guardrails enforces it. Automated Reasoning checks take that one step further by applying formal logic to decisions.

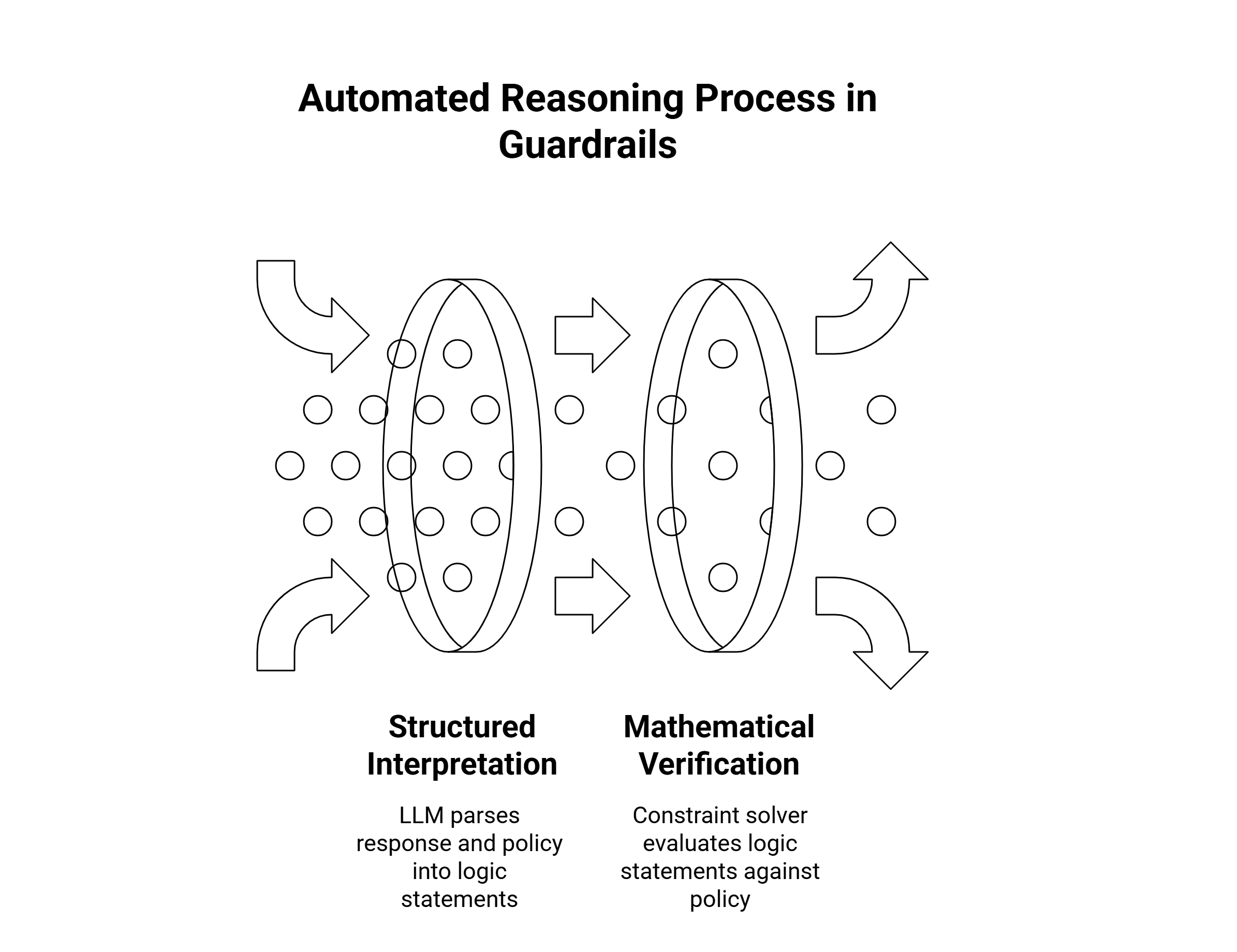

How it works (the two-stage approach)

Structured interpretation: An LLM parses the model’s draft response and your policy into a structured, machine-checkable form (think: logic statements).

Mathematical verification: A constraint solver evaluates those statements to prove whether the response satisfies your policy. If it fails, Guardrails can block, redact, or request a safer reformulation.
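As a rough illustration of the two stages, the sketch below hard-codes what stage one might extract from a draft response and stands in for stage two with a plain rule check. Everything in it is hypothetical (`RefundClaim`, `Finding`, `check_refund_policy`, and the $500 threshold borrowed from the example business rule in this post). The actual service uses a constraint solver and its own policy format, not Python predicates.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class RefundClaim:
    """Stand-in for stage 1: facts extracted from a draft response."""
    refund_amount: Decimal
    escalated: bool


@dataclass(frozen=True)
class Finding:
    compliant: bool
    rule: str
    reason: str


# Assumed threshold, taken from the example rule "Refunds over $500 require escalation".
ESCALATION_THRESHOLD = Decimal("500")


def check_refund_policy(claim: RefundClaim) -> Finding:
    """Stand-in for stage 2: a refund above the threshold implies escalation."""
    rule = "Refunds over $500 require escalation"
    if claim.refund_amount > ESCALATION_THRESHOLD and not claim.escalated:
        return Finding(False, rule, f"refund of ${claim.refund_amount} was not escalated")
    return Finding(True, rule, "no violation found")


# In a real pipeline an LLM would produce this structure from the draft text.
draft_facts = RefundClaim(refund_amount=Decimal("750"), escalated=False)
finding = check_refund_policy(draft_facts)
print(finding.compliant, "-", finding.reason)
```

The point of the split is that the second stage is deterministic: given the same extracted facts and the same rule, it always returns the same finding and the same reason.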

Policies you can verify with AR

PII handling: “No account numbers or SSNs in any outbound text.”

Factual constraints: “Order total must equal sum of line items + tax.”

Business rules: “Refunds over $500 require escalation.”

Safety/compliance: “No medical diagnosis; provide approved triage steps only.”

Brand/lexicon: “Use approved product names; avoid prohibited terms.”

Because AR checks are logic-driven, they’re explainable: you can see exactly which rule a response violated and why.
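To make "machine-checkable" concrete, here is a minimal sketch of two of the example policies above written as plain Python checks that report which rule failed. The function names and the SSN pattern are assumptions for illustration only; production PII detection and AR policies are considerably more thorough than a single regex.

```python
import re
from decimal import Decimal

# Hypothetical pattern: US SSNs written as 123-45-6789. Real PII detection covers far more.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def contains_ssn(text: str) -> bool:
    return SSN_PATTERN.search(text) is not None


def total_is_consistent(line_items: list, tax: Decimal, stated_total: Decimal) -> bool:
    """Order total must equal sum of line items + tax (exact Decimal arithmetic)."""
    return sum(line_items, Decimal("0")) + tax == stated_total


def evaluate(text: str, line_items: list, tax: Decimal, stated_total: Decimal) -> list:
    """Return a human-readable violation for every rule the response breaks."""
    violations = []
    if contains_ssn(text):
        violations.append("PII handling: SSN found in outbound text")
    if not total_is_consistent(line_items, tax, stated_total):
        violations.append("Factual constraint: order total != sum of line items + tax")
    return violations


items = [Decimal("19.99"), Decimal("5.01")]
print(evaluate("Your total is $27.00.", items, Decimal("2.00"), Decimal("27.00")))
print(evaluate("SSN 123-45-6789 is on file.", items, Decimal("2.00"), Decimal("30.00")))
```

Using `Decimal` rather than floats avoids rounding surprises when comparing currency amounts for exact equality.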

Why AR checks change the game

Trust & safety: Prevent high-impact mistakes (bad advice, policy violations, leaked data) before they happen.

Consistency at scale: The same rules fire every time—no fatigue, no drift.

Lower review costs: Fewer manual audits; reviewers focus only on flagged edge cases.

Faster time-to-production: Replace “pilot purgatory” with provable controls your risk teams can sign off on.

Measurable accuracy: With up to 99% verification accuracy on rule checks, you can track compliance as a KPI, not a hope.

Where to use AR checks (with real impact)

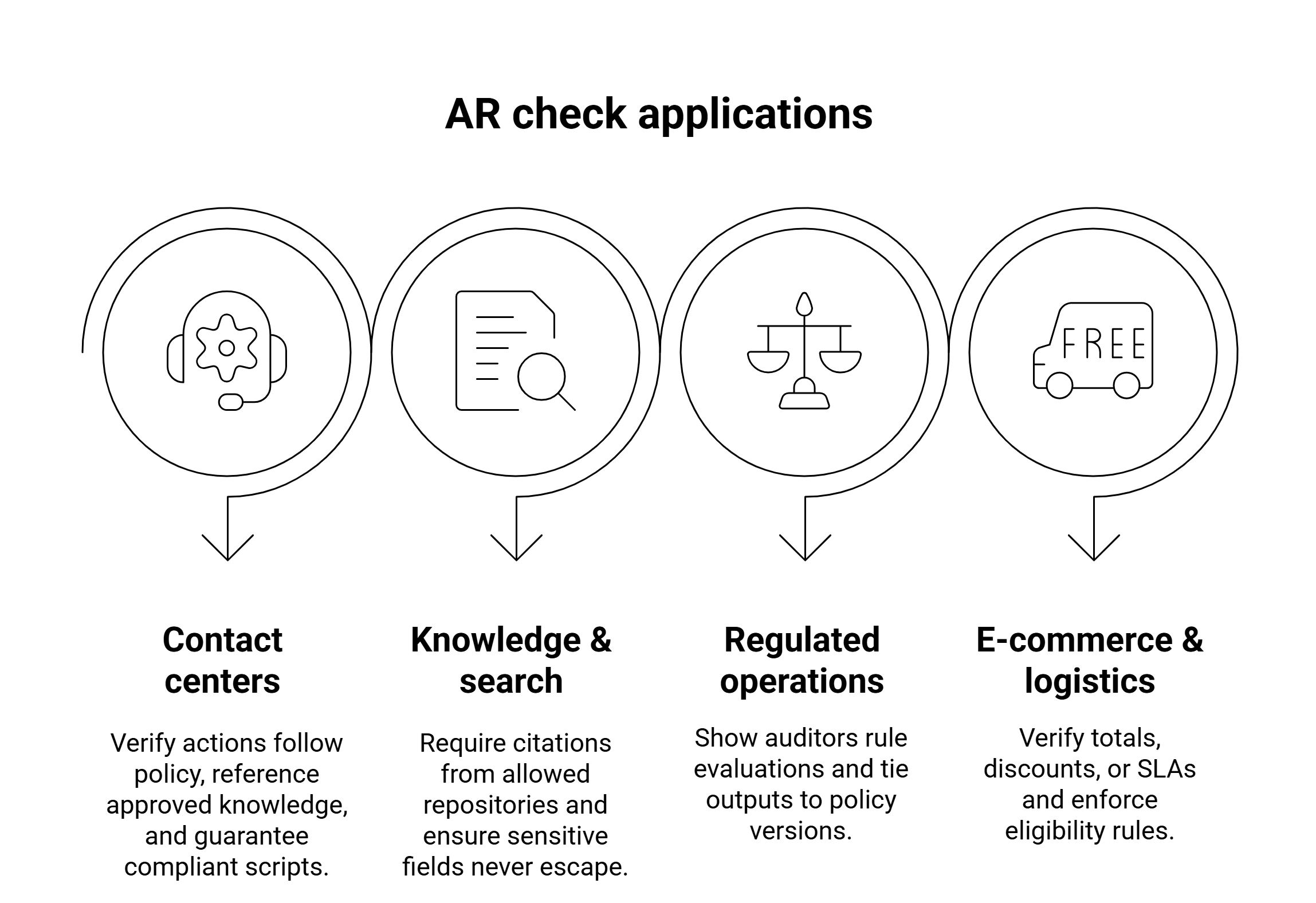

Contact centers (Amazon Connect)

Agent Assist: Verify that suggested actions follow policy (e.g., no refunds over $X, no PII in chat macros).

Self-service flows: Prove that IVR/chat answers only reference approved knowledge or match order/account facts.

Outbound campaigns: Guarantee compliant scripts for regulated industries and regions.

Knowledge & search (Amazon Q Business)

Source-bound answers: Require citations from allowed repositories; block content from untrusted sources.

Redaction on the fly: Ensure sensitive fields never escape retrieval-augmented generation (RAG).

Regulated operations (finance, healthcare, insurance)

Explainability: Show auditors the exact rule evaluations leading to allow/block decisions.

Policy versioning: Tie outputs to policy versions for clean audit trails.

E-commerce & logistics

Math correctness: Verify totals, discounts, or SLAs.

Eligibility rules: Enforce return windows, subscription tiers, or region-based constraints.

Reference architecture: where AR checks fit

Channel apps: Amazon Connect (voice, chat, tasks), web chat, mobile apps.

Orchestration: Lambda/Step Functions to route prompts and apply Guardrails.

Models: Bedrock-hosted FMs (for generation + the “interpretation” stage).

Guardrails with AR checks: Enforce policy before responses return to users.

Observability: CloudWatch metrics/logs; EventBridge for alerts; S3 for archived traces.

Security: KMS for keys; IAM boundaries; VPC endpoints for private data paths.

Governance: Versioned policy store (S3/DynamoDB) mapped to environments (dev/test/prod).
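A minimal sketch of the orchestration step, assuming a Lambda-style function that passes a drafted response through a guardrail before returning it. It calls `apply_guardrail` on a client that behaves like boto3's `bedrock-runtime` client. The guardrail ID, version, and fallback text are placeholders, and how AR findings appear inside `assessments` is not shown here, so check the current API reference before relying on specific fields.

```python
from typing import Any

# Hypothetical fallback used when the guardrail intervenes but returns no replacement text.
SAFE_FALLBACK = "I can't answer that here. Let me connect you with a specialist."


def enforce_guardrail(client: Any, guardrail_id: str, version: str, draft: str) -> dict:
    """Check a drafted model response against a guardrail before it reaches the user."""
    response = client.apply_guardrail(
        guardrailIdentifier=guardrail_id,
        guardrailVersion=version,
        source="OUTPUT",  # the content being checked is model output, not user input
        content=[{"text": {"text": draft}}],
    )
    intervened = response.get("action") == "GUARDRAIL_INTERVENED"
    text = draft
    if intervened:
        outputs = response.get("outputs") or []
        text = outputs[0]["text"] if outputs else SAFE_FALLBACK
    return {
        "text": text,
        "intervened": intervened,
        # Keep the raw assessments so they can be logged for audits.
        "assessments": response.get("assessments", []),
    }


# Usage (requires AWS credentials and an existing guardrail; IDs are placeholders):
#   import boto3
#   client = boto3.client("bedrock-runtime")
#   result = enforce_guardrail(client, "your-guardrail-id", "1", draft_text)
```

Passing the client in as an argument keeps the function easy to test with a stub and lets each environment (dev/test/prod) supply its own guardrail ID and version.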

Step-by-step: implement AR checks (fast)

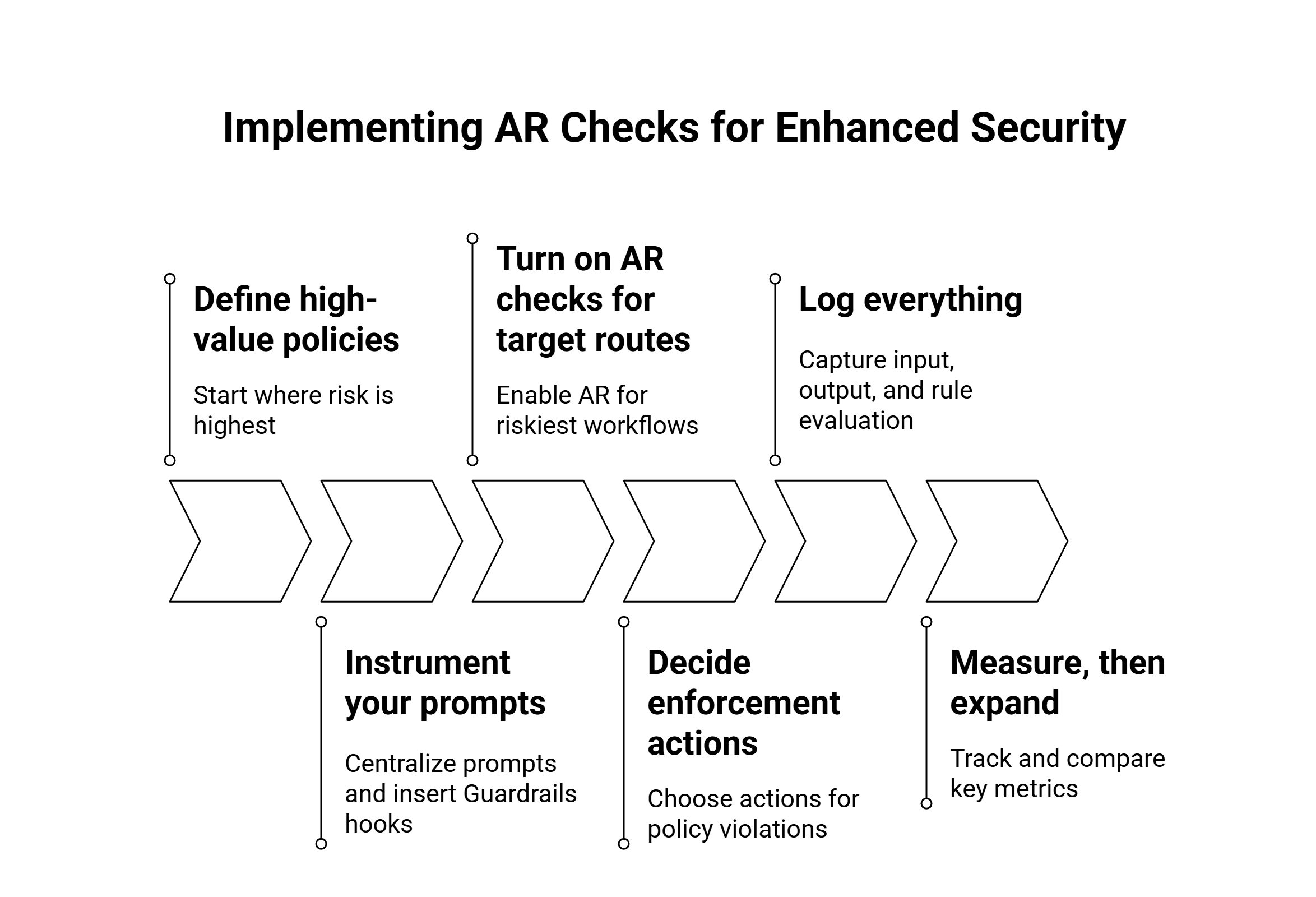

Define high-value policies

Start where risk is highest (PII leakage, regulatory claims, money movement). Write rules as clear, testable statements.

Instrument your prompts

Centralize prompts (Prompt Registry) and insert Guardrails hooks in your generation pipeline, before the response hits a user.

Turn on AR checks for target routes

Enable AR for the riskiest workflows first: refund guidance, compliance answers, identity flows, outbound scripts.

Decide enforcement actions

For each policy: allow, block, request reformulation, redact, or route to human. Keep a safe fallback for critical paths.

Log everything

Capture input, draft output, rule evaluation, and final response. Ship to CloudWatch/S3 for analytics, audits, and training.

Measure, then expand

Track baseline hallucination/compliance rates for a week. After enabling AR checks, compare: violation rate, time-to-resolution, reviewer effort.
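The enforcement and logging steps can be sketched as a small lookup table plus a structured log record. The policy IDs, the severity ordering, and the record fields below are all assumptions for illustration, not a prescribed schema; the one design choice worth copying is that an unknown policy defaults to the strictest action.

```python
import json
from datetime import datetime, timezone
from enum import Enum


class Action(str, Enum):
    ALLOW = "allow"
    REFORMULATE = "reformulate"
    REDACT = "redact"
    HUMAN = "route_to_human"
    BLOCK = "block"


# Hypothetical policy IDs mapped to the action taken when that policy is violated.
ENFORCEMENT = {
    "pii-outbound": Action.REDACT,
    "refund-escalation": Action.HUMAN,
    "medical-advice": Action.BLOCK,
}

# Assumed ordering from least to most strict.
SEVERITY = [Action.ALLOW, Action.REFORMULATE, Action.REDACT, Action.HUMAN, Action.BLOCK]


def decide(violated: list) -> Action:
    """Pick the strictest action among violated policies; unknown policies block."""
    actions = [ENFORCEMENT.get(policy, Action.BLOCK) for policy in violated]
    return max(actions, key=SEVERITY.index, default=Action.ALLOW)


def log_record(prompt: str, draft: str, violated: list, final: str, policy_version: str) -> str:
    """Serialize one evaluation as a JSON line suitable for shipping to a log store."""
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "policy_version": policy_version,
        "input": prompt,
        "draft_output": draft,
        "violated_policies": violated,
        "action": decide(violated).value,
        "final_response": final,
    })


print(log_record("Can I get a refund?", "Sure, $750 refunded.", ["refund-escalation"],
                 "Let me bring in a supervisor.", "v3"))
```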

What to measure (and why)

Violation rate (pre vs. post): % of outputs failing policy checks.

Human override rate: Flags that needed manual intervention.

First-contact resolution / CSAT: Safety without hurting experience.

AHT impact: For Agent Assist, measure time saved per interaction.

Cost per verified output: Show savings from fewer reviews/escalations.

Audit readiness: % of responses with complete, queryable proof trails.

Tip: put these in a lightweight QuickSight or Grafana dashboard so Risk, CX, and Engineering share the same view.
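A few of these metrics fall straight out of the evaluation logs. The sketch below assumes a hypothetical `Record` with three boolean fields per response; the field names and the choice to compute override rate over flagged outputs only are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Record:
    violated: bool    # did any policy check fail?
    overridden: bool  # did a human have to step in on this flag?
    has_trace: bool   # is the full rule-evaluation evidence stored?


def pct(count: int, total: int) -> float:
    """Percentage rounded to one decimal place; 0.0 when there is nothing to count."""
    return 0.0 if total == 0 else round(100.0 * count / total, 1)


def summarize(records: list) -> dict:
    flagged = [r for r in records if r.violated]
    return {
        "violation_rate_pct": pct(len(flagged), len(records)),
        # Override rate is measured against flagged outputs, not all outputs.
        "human_override_rate_pct": pct(sum(r.overridden for r in flagged), len(flagged)),
        "audit_ready_pct": pct(sum(r.has_trace for r in records), len(records)),
    }


sample = [
    Record(violated=True, overridden=True, has_trace=True),
    Record(violated=True, overridden=False, has_trace=True),
    Record(violated=False, overridden=False, has_trace=True),
    Record(violated=False, overridden=False, has_trace=False),
]
print(summarize(sample))
```

Running the same summary over a pre-AR baseline week and a post-AR week gives the pre vs. post comparison directly.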

Known limits (and how to manage them)

Policy coverage: AR checks are only as strong as the rules you write. Start with your “must-not” list.

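False positives: Over-strict rules can block harmless content. Pilot in shadow mode, where checks run and log their findings without blocking anything, to tune rules and thresholds before you enforce them.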

Domain drift: New products, prices, or laws? Tie rules to versioned policies and include expiry checks.

Latency tradeoffs: Formal checks add latency to every response. Measure it on your own workloads, and use async reformulation or pre-approved snippets where speed is critical.
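Shadow mode and policy expiry are both simple to express in code. The sketch below is an assumption-heavy illustration: `run_check`, `policy_is_current`, and the `no_guarantees` rule are hypothetical names, and a real deployment would log the shadow-mode flags rather than print them.

```python
from datetime import date
from typing import Callable, Optional, Tuple


def policy_is_current(expires_on: date, today: Optional[date] = None) -> bool:
    """A policy past its expiry date should be reviewed before it is trusted."""
    return (today or date.today()) <= expires_on


def run_check(
    draft: str,
    is_compliant: Callable[[str], bool],
    shadow_mode: bool,
    fallback: str,
) -> Tuple[str, bool]:
    """Return (text to send, flagged). Shadow mode records the flag but never blocks."""
    flagged = not is_compliant(draft)
    if flagged and not shadow_mode:
        return fallback, True
    return draft, flagged


# Hypothetical rule: outbound text must not promise a "guaranteed" outcome.
def no_guarantees(text: str) -> bool:
    return "guaranteed" not in text.lower()


draft = "Your refund is guaranteed within 24 hours."
fallback = "Let me check on that for you."
print(run_check(draft, no_guarantees, shadow_mode=True, fallback=fallback))
print(run_check(draft, no_guarantees, shadow_mode=False, fallback=fallback))
print(policy_is_current(date(2025, 12, 31), today=date(2026, 1, 1)))
```

In shadow mode the user still sees the original draft, so you can compare flagged responses against human judgment and tune rules before any customer-facing behavior changes.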

What role does Data Sleek play in optimizing AI with Automated Reasoning?

You don’t just need a feature—you need a production-ready safety program. Here’s how Data Sleek gets you there:

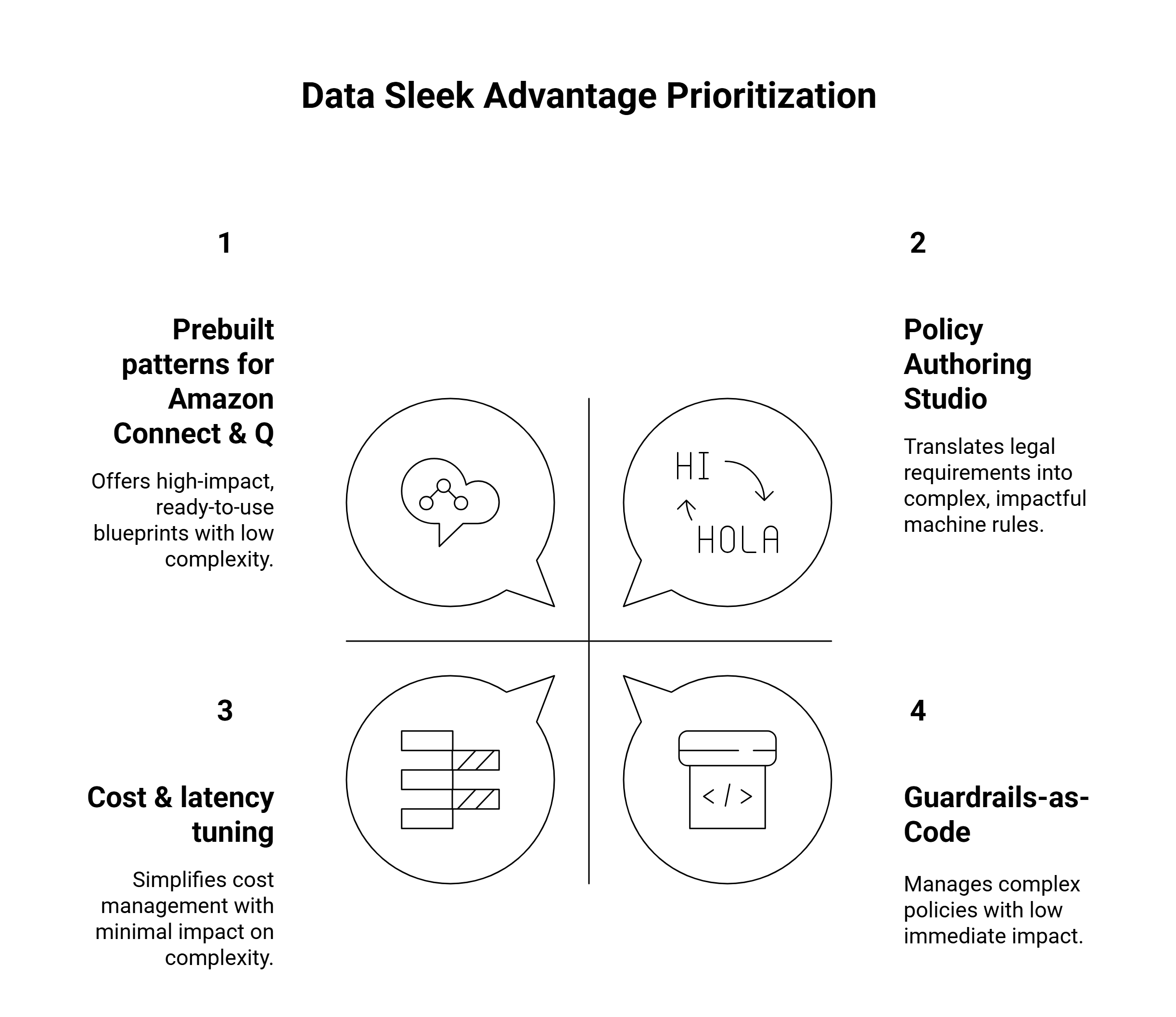

Policy Authoring Studio

We translate legal/compliance requirements into machine-checkable rules and map them to the exact prompts and workflows that matter.

Guardrails-as-Code

Version-controlled policies (Git + IaC) across dev/test/prod with CI/CD, automated tests, and drift detection, so there are no more “mystery settings” in consoles.

Prebuilt patterns for Amazon Connect & Q

Drop-in blueprints for Agent Assist, self-service flows, and knowledge bots that already include AR checks, PII redaction, citation enforcement, and safe fallbacks.

Observability & Audit Packs

Out-of-the-box dashboards (CloudWatch/QuickSight), S3 evidence trails, and exportable audit reports for your risk and security teams.

Cost & latency tuning

We right-size model calls, batch verifications where safe, and use caching/grounded snippets to keep costs in check and experiences snappy.

Enablement, not just implementation

Workshops for CX, Legal, and Engineering so everyone understands what is enforced, why, and how to evolve policies over time.

Time-to-value

Most clients see their first high-risk workflow protected in weeks, not quarters, then expand safely from there.

Ready to trust your AI—at scale?

Automated Reasoning checks make safety provable, not probabilistic. Combine them with good prompt design and grounded data, and you’ve got an AI stack your customers, agents, and auditors can trust.

Data Sleek helps you go from “we should” to “we did”—fast:

Identify high-value policies

Stand up Guardrails with AR checks

Integrate with Amazon Connect and Amazon Q

Ship dashboards and audit trails your leaders can rely on

Let’s de-risk your GenAI roadmap.

Visit datasleek.com to schedule a workshop or request a quick feasibility review of your top workflows.